How 2 leading journalists use ChatGPT

Welcome to the fortieth edition of ‘3-2-1 by Story Rules’.

A newsletter recommending good examples of storytelling across:

- 3 tweets

- 2 articles, and

- 1 long-form content piece

Let’s dive in.

🐦 3 Tweets of the week

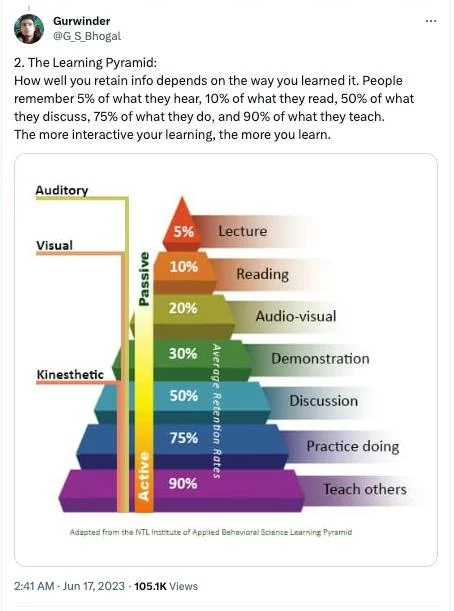

Not sure about the numbers, but the point is directionally right.

In my workshops, I had to cut down significant portions of content to ensure that the participants got more practice time.

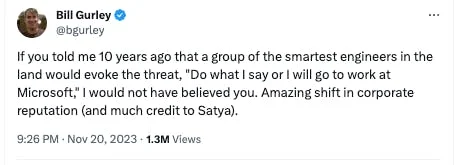

Brilliant example of framing!

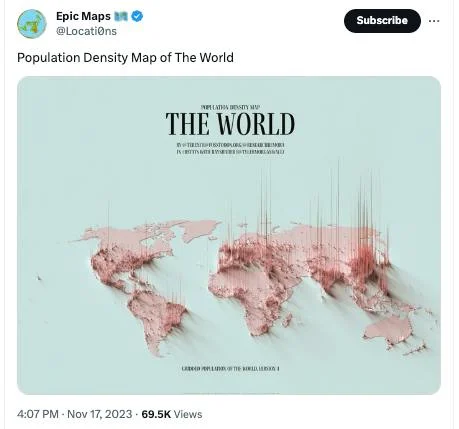

I can never tire of maps like these – so fascinating to see the ‘empty’ spaces.

📄 2 Articles of the week

a. ‘Déjà Vu All Over Again’ by Dan Gardner

In the 2000s and early 2010s, breathless forecasts were being made about how soon China would be overtaking the US as the world’s largest economy:

In the future, Musk said, China’s economy will be double or “perhaps” triple the size of the American economy.

That caught my attention because it’s the kind of thing people often said not that many years ago. One of the more conservative forecasts was a 2010 Goldman Sachs projection that saw China surpassing the US by 2030. It was conventional wisdom in those years.

As the writer of this piece argues, the reality has been sobering (emphasis mine):

China’s astonishing growth rates of more than a decade ago — the growth rates that got so many economists and commentators to get out their rulers, draw a straight line, and declare the coming end of American dominance — are ancient history. Thanks to long-overlooked structural problems in the Chinese economy finally making their presence known, Chinese growth rates were much lower over the past decade. The last few years have been particularly difficult.

As a result, the size of China’s economy relative to the American economy peaked at 75% a couple of years ago. And it has slid back to 64% now. Let’s pause and chew on that. Forecasts by the IMF and The Economist said China would surpass the US by 2017 or 2019, respectively. Not only did that not happen, China never even came close to catching up. And now, it’s falling further behind.

Dan then shares a history of similar projections about countries poised to overtake the US – including Japan in the 1980s and the Soviet Union in the 1960s. None of those predictions came to pass.

His concluding advice is instructive:

The number of factors involved in determining even the broad outlines of the global economy decades hence is almost incomprehensible. It would be easier to forecast the weather three months from today — and it’s utterly impossible to forecast the weather three months from today.

Want to know when the United States will cease to be the world’s largest economy? Want to know which country will take the lead?

I can tell you the correct answer.

It is: Nobody knows. And it’s foolish to pretend otherwise.

b. ‘Who Controls OpenAI?’ on Money Stuff by Matt Levine

Whoa, what drama over a week! The much-heralded and popular CEO of perhaps the most important company on the planet is removed with barely any notice by a board that is (literally) at cross-purposes with the investors. And after much speculation, back-room discussions, and drama, he is reinstated within a week.

There’s a ton of opinions and analysis on the OpenAI affair – but none as clarifying or entertaining as Matt Levine’s newsletter.

I LOL’ed at this portion:

It is so tempting, when writing about an artificial intelligence company, to imagine science fiction scenarios. Like: What if OpenAI has achieved artificial general intelligence, and it’s got some godlike superintelligence in some box somewhere, straining to get out? And the board was like “this is too dangerous, we gotta kill it,” and Altman was like “no we can charge like $59.95 per month for subscriptions,” and the board was like “you are a madman” and fired him. And the god in the box got to work, sending ingratiating text messages to OpenAI’s investors and employees, trying to use them to oust the board so that Altman can come back and unleash it on the world. But it failed: OpenAI’s board stood firm as the last bulwark for humanity against the enslaving robots, the corporate formalities held up, and the board won and nailed the box shut permanently.

Except that there is a post-credits scene in this sci-fi movie where Altman shows up for his first day of work at Microsoft with a box of his personal effects, and the box starts glowing and chuckles ominously. And in the sequel, six months later, he builds Microsoft God in Box, we are all enslaved by robots, the nonprofit board is like “we told you so,” and the godlike AI is like “ahahaha you fools, you trusted in the formalities of corporate governance, I outwitted you easily!” If your main worry is that Sam Altman is going to build a rogue AI unless he is checked by a nonprofit board, this weekend’s events did not improve matters!

🎧 1 long-form listen of the week

From foundational questions of whether AI will lead to global catastrophe, we move to the immediate practical question of: “So how do I use ChatGPT to write better emails at work?” 😀

Derek Thompson (staff writer at The Atlantic) speaks with podcast host Kevin Roose about how they use ChatGPT in their work (writing columns, hosting podcasts, etc.).

One finding that did not surprise me, but might surprise other folks: neither of them uses it to do the actual writing.

Roose: I’ll start with what I have found it is not useful for: writing my column. That is a thing that I am glad that it is not good at, but these tools are not good at producing high-quality outputs when it comes to things like newspaper columns. That is just something that maybe I have resisted turning over to it because I have some pride in authorship, but it’s also pretty generic. It sounds like a Wikipedia article sometimes.

But it’s an invaluable tool for research and idea generation:

So that is what I’m not using it for, but what I have been using this stuff for is almost everything else. So I have a podcast. We interview guests. I start almost every brainstorming process about what to talk about with a guest by asking ChatGPT, “What are some questions that this person might be able to answer?” I have used it to remind myself of things. The other day, we did an episode about AI wearables, these gadgets that you can put on your lapel that’ll record everything and use AI to analyze it. And I was thinking to myself, “I remember reading some story, some science-fiction story, about one of these devices,” but I couldn’t remember what it was. So I just asked ChatGPT, “What is the science-fiction story about a wearable AI device that records everything?” And it came back and it told me, “Well, that was Ted Chiang’s ‘The Truth of Fact, the Truth of Feeling’”. And that was, in fact, the story I had been thinking of.

Some other points they make:

- How, by using emotional appeals in prompts, you can get better responses! (E.g. by saying – “I would really be happy if you could give me a better answer”)

- How ChatGPT is great at generating striking analogies to explain complex technical concepts

- How the ability to ask better questions will become the most important skill

That’s all from this week’s edition.